A Practical Approach to Integrating AI into Security Operations

Senior Advisor, CSA

AI-Enabled Security Advisory

Security leaders are under growing pressure to do more with AI. New tools promise faster investigations, better reporting, stronger intelligence, and more efficient operations. Some of that promise may be exaggerated, but this is not a ‘wait and see’ moment for security leaders. The organizations that will benefit most from these new technologies are the ones that begin now, with a disciplined approach to building the right foundation and integrating artificial intelligence in practical, governed ways even as the technology continues to evolve.

Over the past few years, one of my core responsibilities was tackling this issue head-on. My mandate: find ways to integrate AI into security functions, investigations, compliance, and risk management.

In my experience, organizations get the best results from AI when they start with a clear understanding of the problem they want to address, strengthen the process around it, and define how the new capability will actually work in practice. AI can absolutely improve performance, but it does not replace the need for sound operations: clear processes, strong governance, and a well-defined operating model.

AI introduces a level of ambiguity and experimentation that can feel counterintuitive in security environments, where leaders are trained to reduce uncertainty. Navigating that reality requires discipline, structure, and an end-to-end view of how AI supports the broader security operating model.

Start with the current state

Before evaluating an AI tool, organizations should first understand the problem they are trying to solve. This might be a lack of investigative consistency, lengthy review times, limited reporting, excessive administrative burden, limited intelligence capabilities, or poor use of existing systems. Identifying the problem is a reasonable place to begin, but leaders still need to understand what is driving the issue. Is the problem volume? Weak documentation? Inconsistent workflows? Undefined decision thresholds? Fragmented handoffs across teams? Those questions matter, because AI delivers the greatest value when it is applied to a clear, well-structured process with defined decision points and operating expectations.

A strong starting point is a current-state assessment that examines workflows, performance gaps, documentation quality, decision points, and related dependencies. That gives leaders a clearer view of where AI can create value, where operational integration will matter most, what foundational improvements may be needed to support long-term success, and which areas of the security program are mature enough for AI enhancement.
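One way to make a current-state assessment concrete is to rate each area on a simple scale and address the weakest areas first. The sketch below is illustrative only: the 1-to-5 scale, the `READY_THRESHOLD` value, the `AreaRating` type, and the example ratings are all assumptions made for this example, not part of any standard framework.

```python
from dataclasses import dataclass

# Assumption: a 1-5 maturity scale, where 3 or above is treated as
# "mature enough to consider AI enhancement". Both choices are illustrative.
READY_THRESHOLD = 3


@dataclass
class AreaRating:
    area: str      # assessment area, e.g. "documentation quality"
    maturity: int  # 1 (ad hoc) to 5 (well defined and consistently followed)


def split_by_readiness(ratings: list[AreaRating]) -> tuple[list[str], list[str]]:
    """Return (ready, needs_work) area names, each ordered weakest first."""
    ordered = sorted(ratings, key=lambda r: r.maturity)
    ready = [r.area for r in ordered if r.maturity >= READY_THRESHOLD]
    needs_work = [r.area for r in ordered if r.maturity < READY_THRESHOLD]
    return ready, needs_work


# Hypothetical ratings for a single investigative workflow.
example = [
    AreaRating("workflows", 4),
    AreaRating("documentation quality", 2),
    AreaRating("decision points", 1),
    AreaRating("system dependencies", 3),
]
ready, needs_work = split_by_readiness(example)
print("Candidates for AI enhancement:", ready)
print("Strengthen first:", needs_work)
```

The numbers matter less than the conversation they force: an area rated low is a signal to fix the process before layering AI on top of it.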

Strengthen the process before scaling the technology

One of the most common mistakes in AI adoption is treating it as mainly a technology exercise rather than as the integration of AI into everyday security operations, including upstream work such as policy review, strategy development, and operational planning. Alignment with legal, privacy, cybersecurity, and procurement requirements, along with overall system compatibility, matters, but in security environments, operational readiness matters just as much. Policies, procedures, escalation paths, reporting standards, and documentation practices all shape whether AI will support the work effectively.

If those elements are immature, inconsistent, or unclear, AI is less likely to deliver sustainable value. That is why strengthening the operating foundation should come before broader deployment.

I have seen similar issues in other security solutions over the years. A tool may appear to address one problem, but if leaders do not think through how it changes surrounding workflows, it often creates new problems elsewhere. AI carries that same risk, only faster and at greater scale, which makes process review and operational discipline all the more important before broad deployment.

For security leaders, AI can help accelerate workflows, improve consistency, strengthen reporting, and reduce manual burden, but those gains are easier to realize when the surrounding operation is designed to support them.

Practical integration beyond the single use case

The value of AI in security rarely comes from one isolated function.

A capability may help with case summaries, incident intake, pattern recognition, reporting, or intelligence development, but leaders need to ask a broader question: how does this affect the rest of the operating environment?

An AI-enabled investigations tool, for example, may improve efficiency, but it can also affect legal review, HR coordination, documentation standards, and case defensibility. A triage capability may speed intake, but it can also change workload, escalation expectations, and oversight needs.

Those are operating model questions, not just technology questions, and they are central to making AI useful in a real-world security environment.

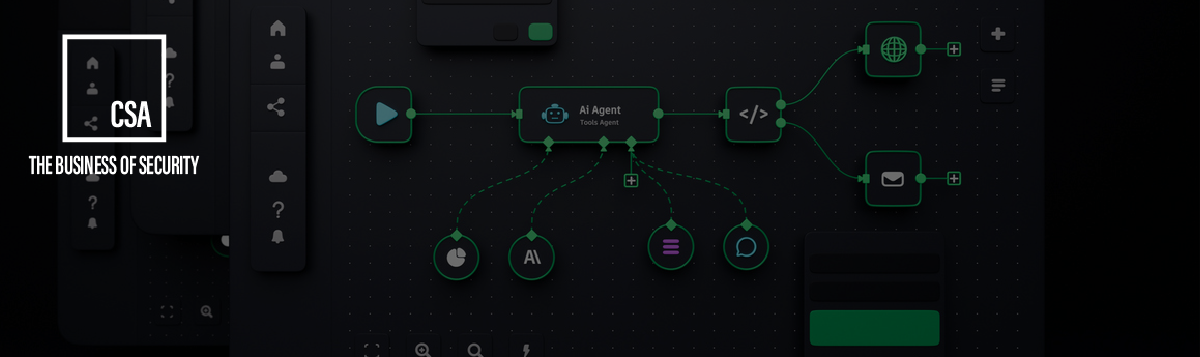

In many cases, the greatest value comes not from one isolated AI use case, but from how AI supports connected workflows across intake, investigations, intelligence, reporting, governance, and adjacent enterprise functions as part of an end-to-end security operating model. Understanding the impact AI has on connected workflows is how an organization begins to move from experimentation to practical integration across the security operating environment.

Pilot with real users

AI should not be rolled out broadly just because it works in a demo. In most environments, AI works best as a layer on top of existing systems, accelerating how people interact with data, documentation, and investigative workflows.

Security work still depends heavily on judgment, nuance, context, and discretion. Technology is valuable for speed, scale, and structure, which makes AI a force multiplier for existing security systems rather than a replacement for them. People remain essential for interpretation and decision-making. That is why human oversight must remain part of the model.
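To illustrate what human oversight can look like inside a workflow, here is a minimal sketch of routing AI triage suggestions so that every path ends with a person. It assumes a hypothetical tool that returns a suggested priority and a confidence score between 0 and 1; the `TriageSuggestion` type, the route names, and the `AUTO_DRAFT_THRESHOLD` value are placeholders that a pilot would need to tune.

```python
from dataclasses import dataclass

# Assumption: the AI tool reports a confidence score between 0 and 1.
# The threshold is a placeholder to be tuned during a pilot.
AUTO_DRAFT_THRESHOLD = 0.85


@dataclass
class TriageSuggestion:
    case_id: str
    suggested_priority: str  # e.g. "low", "medium", "high"
    confidence: float        # model-reported confidence, 0.0 to 1.0


def route(suggestion: TriageSuggestion) -> str:
    """Decide how much human attention an AI suggestion receives.

    No route closes a case automatically; each one ends with an analyst.
    """
    if suggestion.suggested_priority == "high":
        # High-impact calls go to a person immediately, whatever the confidence.
        return "analyst_review_now"
    if suggestion.confidence < AUTO_DRAFT_THRESHOLD:
        # Uncertain output is treated as a hint, not a decision.
        return "analyst_review_queue"
    # The AI pre-fills the record; an analyst still confirms it.
    return "analyst_confirm_draft"


print(route(TriageSuggestion("C-101", "high", 0.97)))
print(route(TriageSuggestion("C-102", "low", 0.60)))
print(route(TriageSuggestion("C-103", "low", 0.92)))
```

The design choice worth noting is that the control lives in the workflow itself rather than in a training guide: the tool cannot act without a human step, regardless of how confident it is.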

In practice, that means piloting AI in phases with real users across different levels of experience and technical comfort. That process helps leaders see where guidance needs to be more specific, where built-in controls are more useful than lengthy training, and where the workflow itself needs adjustment.

I learned that directly in my own work. In one environment, I developed a highly detailed training guide to cover a wide range of AI use cases. It was thorough, but it was also too much for many users. In a later implementation, we focused formal training on general guardrails and embedded more detailed guidance into the tool itself. That approach was more practical and more usable.

That kind of learning only happens when organizations test deliberately before scaling.

Build governance in from the start

AI implementation in security should be phased, structured, and governed.

When organizations move too quickly, usage becomes inconsistent, expectations become unclear, and oversight falls behind. In a security environment, that can create operational, legal, and reputational issues very quickly.

Governance should not be added later. It should be part of the operating model from the beginning, and include clear guardrails, documentation expectations, human review, defined decision authority, and an understanding of how performance will be monitored over time.
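As a rough illustration of how documentation expectations, human review, and performance monitoring can be captured together, the sketch below records each human review of an AI output and computes how often reviewers edit or reject what the tool produced. The record fields, the allowed decision values, and the "override rate" metric are assumptions chosen for this example; a real program would define its own.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Assumption: reviewers record one of these three outcomes.
ALLOWED_DECISIONS = {"accepted", "edited", "rejected"}


@dataclass
class AIUsageRecord:
    case_id: str
    tool: str               # hypothetical tool name
    output_summary: str     # short description of what the AI produced
    reviewer: str           # the person accountable for the decision
    decision: str           # one of ALLOWED_DECISIONS
    recorded_at: str        # UTC timestamp in ISO 8601 format


def record_review(case_id: str, tool: str, output_summary: str,
                  reviewer: str, decision: str) -> AIUsageRecord:
    """Create an audit record; refuse entries with no named human reviewer."""
    if not reviewer:
        raise ValueError("a named human reviewer is required")
    if decision not in ALLOWED_DECISIONS:
        raise ValueError(f"unknown decision: {decision!r}")
    timestamp = datetime.now(timezone.utc).isoformat()
    return AIUsageRecord(case_id, tool, output_summary, reviewer, decision, timestamp)


def override_rate(records: list[AIUsageRecord]) -> float:
    """Share of AI outputs that reviewers edited or rejected (0.0 if no records)."""
    if not records:
        return 0.0
    overridden = sum(1 for r in records if r.decision != "accepted")
    return overridden / len(records)


# Hypothetical pilot data.
log = [
    record_review("C-1", "case-summarizer", "draft summary", "analyst_a", "accepted"),
    record_review("C-2", "case-summarizer", "draft summary", "analyst_b", "edited"),
    record_review("C-3", "case-summarizer", "draft summary", "analyst_a", "rejected"),
    record_review("C-4", "case-summarizer", "draft summary", "analyst_c", "accepted"),
]
print(f"Override rate: {override_rate(log):.0%}")
```

A rising override rate is not proof that the tool is failing, but it is a prompt to look at the guidance, the workflow, or the tool itself before expanding use.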

The goal is not simply to deploy a tool, but to build a capability that can support the business, stand up to scrutiny, and mature responsibly as the technology evolves. For many organizations, this starts with assessing readiness, clarifying the highest-value use cases, and building a practical roadmap for integrating AI into the broader security operating environment.

A practical path for security leaders

For most organizations, a disciplined approach to AI in security comes down to five steps:

- Assess the Current State

Start by evaluating the problem to be solved. Review the workflows involved, current performance gaps, documentation quality, decision points, system dependencies, and adjacent stakeholders. The goal is to understand whether the challenge is truly a technology issue, a process issue, an integration issue, or some combination of the three.

- Strengthen the Operating Foundation

Before introducing AI, review and update the surrounding processes and procedures. This includes escalation paths, documentation standards, governance expectations, operating rhythms, and the policies that shape how work is performed. AI delivers the greatest value when it is built on a sound operational base.

- Define the Integration Model

Determine where AI fits across the broader security environment and how it will operate end-to-end across connected workflows. Consider how it may affect intake, investigations, reporting, intelligence, training, Legal, HR, Compliance, and executive decision support. The value of AI is rarely limited to one isolated task. It is often greatest when AI supports connected workflows across systems and functions. This is also the stage where organizations should evaluate and choose the AI solution that best fits the use case, operating model, integration requirements, and governance expectations.

- Test with Humans in the Loop

Pilot use cases in structured phases with users across different levels of technical comfort and security expertise. This helps refine workflows, thresholds, usability, training needs, and escalation expectations while preserving the role of human judgment in decision-making.

- Implement with Governance and Iteration

Roll out in stages, build in clear guardrails, monitor performance, and adjust based on user behavior, operational results, and enterprise risk considerations. Governance should not be added later. It should be part of the operating model from the start.

What This Means for Security Leaders

AI is already changing how security work gets done. The real question is not whether organizations will use it; the question is whether they will move now, with enough discipline to make implementation effective.

The strongest AI-enabled security programs will not be the ones with the most tools. They will be the ones where leaders accept the ambiguity that comes with AI and develop an end-to-end strategy for how it supports the broader security operating environment.

That is how security organizations can move from AI interest to operational capability.

-

Matthew J. Logan is a Senior Advisor at Corporate Security Advisors, specializing in AI-Enabled Security Advisory Services. He brings nearly 20 years of experience leading work across enterprise investigations, asset protection strategy, intelligence, and operations within large, complex organizations. His background includes aligning security, investigations, and operational risk programs with broader business strategy, governance, and execution.
